Main Concept

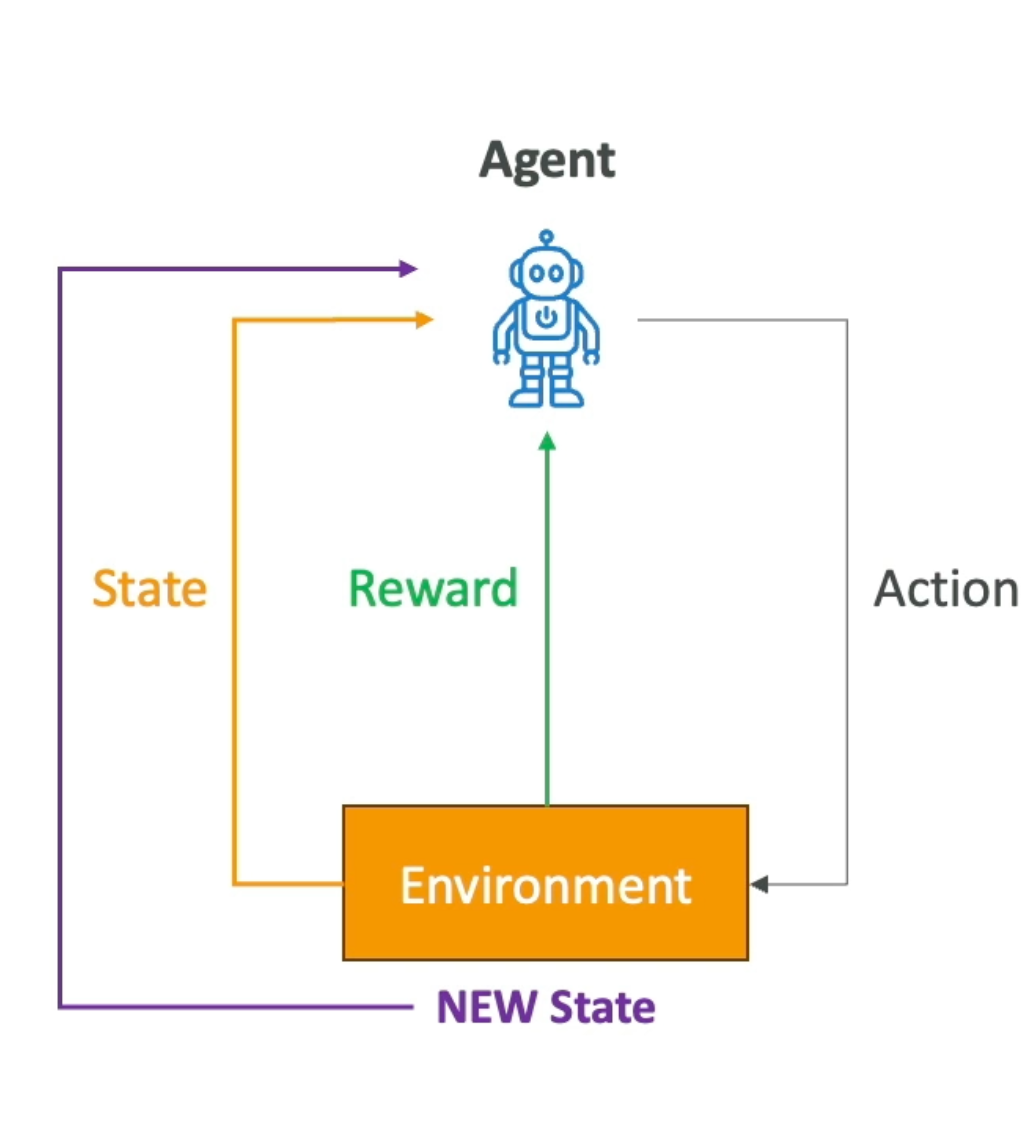

Reinforcement Learning is a type of Machine Learning where an agent learns to make decisions by interacting with an environment. The agent receives feedback in the form of rewards or penalties for each action it takes, and over time learns the strategy (policy) that maximizes cumulative reward.

Unlike Supervised Learning, there are no labeled examples — the agent discovers the right behavior entirely through trial and error.
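The "cumulative reward" the agent maximizes can be made concrete as the sum of the rewards collected over one episode. Many RL formulations also weight later rewards by a discount factor `gamma` between 0 and 1. That factor is an addition not covered in these notes, and with `gamma=1.0` the sum is just the plain total. The function name and the reward sequence below are hypothetical, chosen for illustration.

```python
def discounted_return(rewards, gamma=1.0):
    """Sum rewards, weighting the reward received k steps from now by gamma**k.

    gamma=1.0 gives the plain cumulative reward; gamma < 1 values
    near-term rewards more than distant ones.
    """
    total = 0.0
    # Walk backwards so each step applies G_t = r_t + gamma * G_{t+1}
    for r in reversed(rewards):
        total = r + gamma * total
    return total


episode = [-1, -1, -10, -1, 100]  # hypothetical rewards from one episode
print(discounted_return(episode))  # 87.0
print(discounted_return(episode, gamma=0.9))
```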

Key Concepts

| Concept | Description |
|---|---|
| Agent | The learner or decision-maker |
| Environment | The external system the agent interacts with |
| State | The current situation of the environment |
| Action | A choice made by the agent |
| Reward | Feedback from the environment based on the action taken |
| Policy | The strategy the agent uses to select actions given a state |

How It Works

- The Agent observes the current State of the Environment

- It selects an Action based on its current Policy

- The Environment transitions to a new State and returns a Reward

- The Agent updates its Policy based on the reward received

- The loop repeats, with the goal of maximizing cumulative reward over time
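The loop above can be sketched in Python. `CorridorEnv`, `RandomAgent`, and `run_episode` are hypothetical names used for illustration, not a library API. The environment is an assumed one-dimensional corridor with the exit at the right end. The agent picks actions at random, and its `update` method is an empty placeholder marking where a learning algorithm would adjust the policy.

```python
import random


class CorridorEnv:
    """Hypothetical toy environment: positions 0..length-1, exit at the right end."""

    def __init__(self, length=5):
        self.length = length
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # action is -1 (left) or +1 (right); moving past the left edge counts as a wall hit
        next_state = self.state + action
        if next_state < 0:
            return self.state, -10, False
        self.state = next_state
        if self.state == self.length - 1:
            return self.state, 100, True
        return self.state, -1, False


class RandomAgent:
    """Placeholder agent whose policy ignores the state and picks a random action."""

    def __init__(self, seed=0):
        self.rng = random.Random(seed)

    def select_action(self, state):
        return self.rng.choice([-1, +1])

    def update(self, state, action, reward, next_state):
        pass  # a learning agent would adjust its policy here


def run_episode(env, agent, max_steps=100):
    state = env.reset()                                  # 1. observe the current state
    total_reward = 0
    for _ in range(max_steps):
        action = agent.select_action(state)              # 2. choose an action from the policy
        next_state, reward, done = env.step(action)      # 3. environment returns new state and reward
        agent.update(state, action, reward, next_state)  # 4. agent updates its policy
        total_reward += reward
        state = next_state
        if done:
            break
    return total_reward


print(run_episode(CorridorEnv(), RandomAgent(seed=0)))
```

Keeping the environment and the agent as separate objects mirrors the table above: the environment owns the state and the rewards, while the agent owns the policy.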

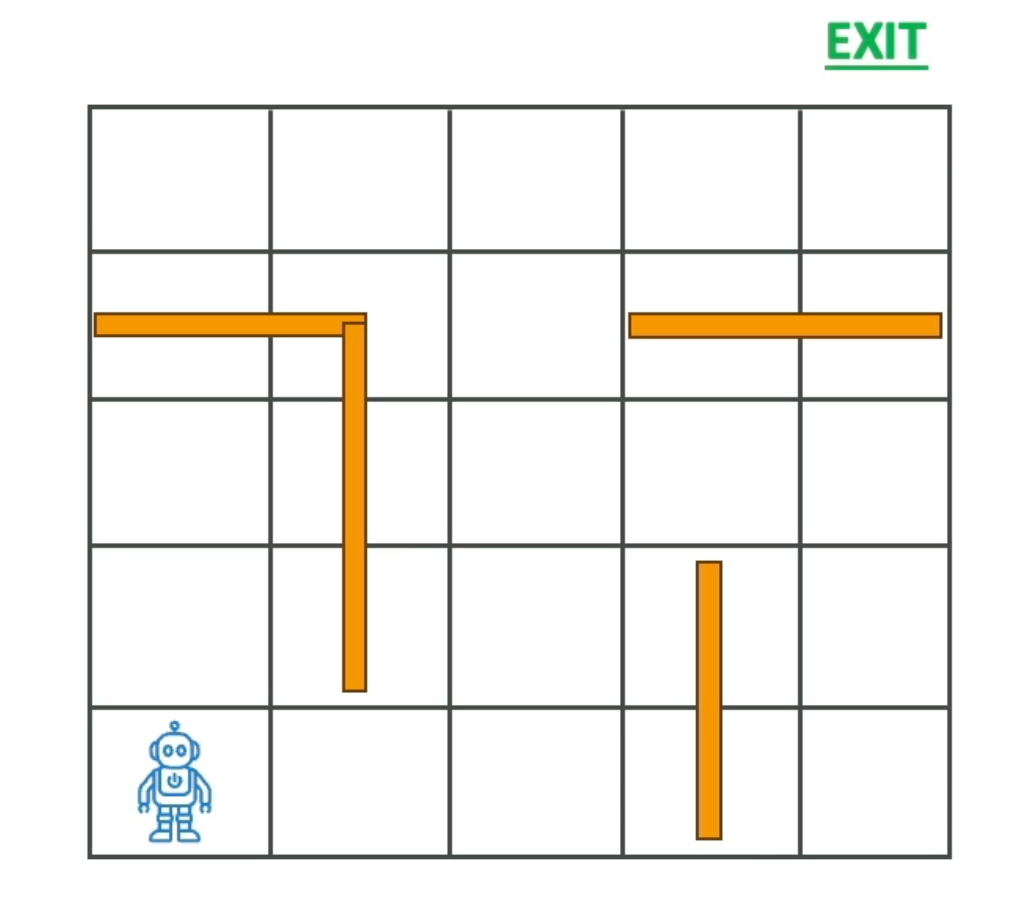

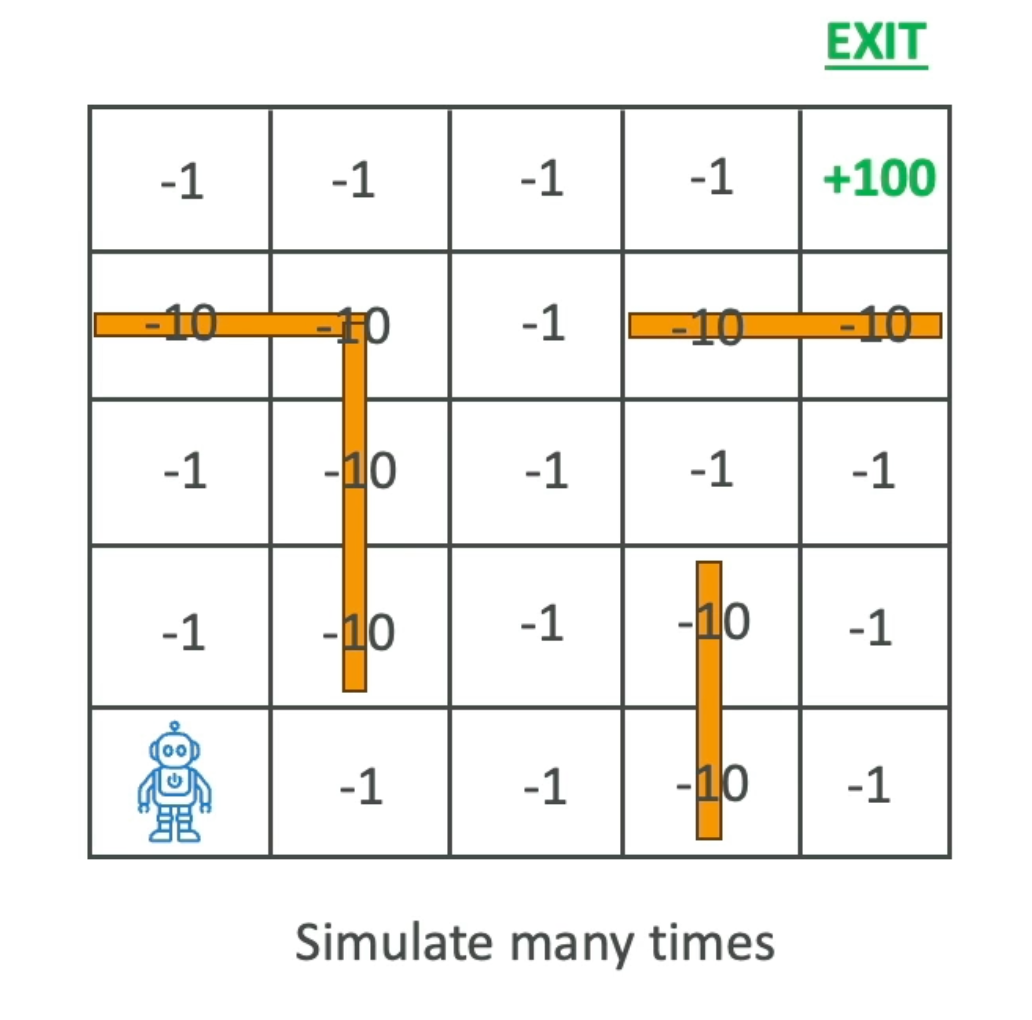

Example — Robot in a Maze

Scenario: Train a robot to escape a maze using the shortest path possible.

Reward structure:

- Move to a free cell → -1 point

- Hit a wall → -10 points

- Reach the exit → +100 points
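The reward structure can be written as a single step function. The grid layout ('#' wall, '.' free cell, 'S' start, 'E' exit), the `step` name, and the rule that a robot hitting a wall stays where it is are illustrative assumptions. Only the point values come from the list above.

```python
# Hypothetical maze layout: '#' = wall, '.' = free cell, 'S' = start, 'E' = exit
MAZE = [
    "S.#.",
    ".##.",
    "...E",
]

ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}

# Point values from the reward structure
REWARD_FREE = -1
REWARD_WALL = -10
REWARD_EXIT = 100


def step(pos, action):
    """Apply one move; return (new_position, reward, reached_exit)."""
    dr, dc = ACTIONS[action]
    r, c = pos[0] + dr, pos[1] + dc
    # Moving off the grid or into '#' counts as hitting a wall: the robot stays put
    if not (0 <= r < len(MAZE) and 0 <= c < len(MAZE[0])) or MAZE[r][c] == "#":
        return pos, REWARD_WALL, False
    if MAZE[r][c] == "E":
        return (r, c), REWARD_EXIT, True
    return (r, c), REWARD_FREE, False


# Score one route: down the left column, then along the bottom row
pos, total = (0, 0), 0
for a in ["down", "down", "right", "right", "right"]:
    pos, reward, done = step(pos, a)
    total += reward
print(total, done)  # 96 True
```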

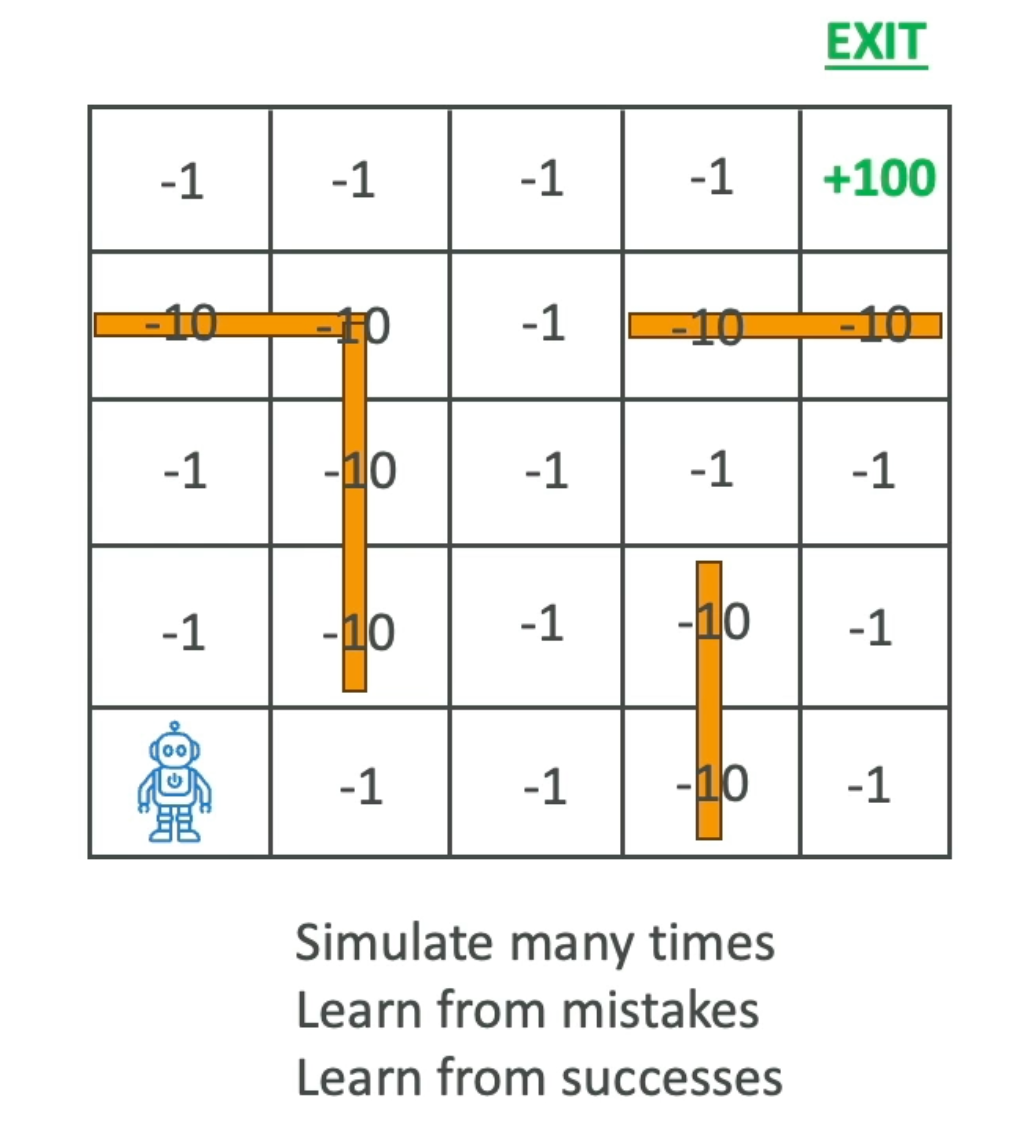

At the start, the robot has no knowledge of the maze and explores randomly, hitting walls and losing points. Over many simulated runs (episodes), it learns which paths cost fewer points and which lead to the exit.

Eventually the agent learns the optimal route: the one that reaches the exit in the fewest steps. Because every move costs 1 point, the shortest route is also the one with the highest total reward. It’s the equivalent of letting an AI play a video game hundreds of times: at first it loses constantly, but eventually it masters the game.
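One standard way to learn such a route is tabular Q-learning. The notes do not name a specific algorithm, so treat this as a minimal sketch under stated assumptions: a small hypothetical maze, the point values from the reward structure, and hand-picked hyperparameters (`alpha` learning rate, `gamma` discount, `epsilon` exploration rate) that are illustrative rather than tuned. The agent keeps a table of estimated returns for each (cell, move) pair. It mostly takes the best-looking move but explores at random 10% of the time, and it nudges each estimate toward the reward received plus the best estimate from the next cell.

```python
import random
from collections import defaultdict

# Hypothetical maze: '#' = wall, '.' = free cell, 'S' = start, 'E' = exit
MAZE = ["S.#.", ".##.", "...E"]
ACTIONS = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}
START = (0, 0)


def step(pos, action):
    """Return (new_position, reward, done) using the maze reward structure."""
    dr, dc = ACTIONS[action]
    r, c = pos[0] + dr, pos[1] + dc
    if not (0 <= r < len(MAZE) and 0 <= c < len(MAZE[0])) or MAZE[r][c] == "#":
        return pos, -10, False      # hit a wall: stay in place
    if MAZE[r][c] == "E":
        return (r, c), 100, True    # reached the exit
    return (r, c), -1, False        # moved to a free cell


def train(episodes=1000, alpha=0.5, gamma=0.9, epsilon=0.1, max_steps=100, seed=0):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = defaultdict(float)  # q[(state, action)] -> estimated return, starts at 0
    for _ in range(episodes):
        pos = START
        for _ in range(max_steps):
            # Explore with probability epsilon, otherwise act greedily
            if rng.random() < epsilon:
                action = rng.choice(list(ACTIONS))
            else:
                action = max(ACTIONS, key=lambda a: q[(pos, a)])
            next_pos, reward, done = step(pos, action)
            # Q-learning target: reward plus discounted best value of the next state
            best_next = 0.0 if done else max(q[(next_pos, a)] for a in ACTIONS)
            q[(pos, action)] += alpha * (reward + gamma * best_next - q[(pos, action)])
            pos = next_pos
            if done:
                break
    return q


def greedy_path(q, max_steps=20):
    """Follow the learned policy (always the highest-valued move) from START."""
    pos, path = START, [START]
    for _ in range(max_steps):
        action = max(ACTIONS, key=lambda a: q[(pos, a)])
        pos, _, done = step(pos, action)
        path.append(pos)
        if done:
            break
    return path


q_table = train()
print(greedy_path(q_table))
```

On this layout the only route to the exit runs down the left column and along the bottom row, so after training the greedy path should be that five-move route. The random moves taken with probability `epsilon` are what let the robot find the exit at all, mirroring the early random exploration described above.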

Context — Why It Matters for the Exam

RL is distinct from supervised and unsupervised learning in a fundamental way: there is no fixed, pre-collected training dataset. The model generates its own experience by interacting with an environment. This makes it well-suited for sequential decision problems where the right answer isn’t known in advance.

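RLHF (Reinforcement Learning from Human Feedback) applies this same principle to fine-tune LLMs: human raters compare or rank model responses, and those preferences (typically distilled into a trained reward model) supply the reward signal that teaches the model to produce better responses.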
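A common way to turn human feedback into a reward signal is to have raters compare pairs of responses and train a separate reward model so that the preferred response scores higher. The LLM is then fine-tuned with RL against that model's scores. The pairwise loss below is one widely used formulation (Bradley-Terry style). The function name and the plain-float scores are illustrative assumptions; in practice the scores come from a neural reward model evaluated on each response.

```python
import math


def preference_loss(score_chosen, score_rejected):
    """Pairwise loss -log(sigmoid(chosen - rejected)).

    Small when the human-preferred response is scored well above the
    rejected one, large when the reward model ranks them the wrong way.
    """
    margin = score_chosen - score_rejected
    # Numerically stable log(1 + exp(-margin)) for both signs of margin
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


print(preference_loss(2.0, -1.0))  # preferred response scored higher: small loss
print(preference_loss(-1.0, 2.0))  # ranked the wrong way: large loss
```

Training the reward model means minimizing this loss over many rated pairs; the RL step then rewards the LLM for responses the reward model scores highly.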

Applications

- Gaming — AlphaGo, chess engines, video game AIs

- Robotics — navigation and object manipulation in dynamic environments

- Finance — portfolio management and trading strategies

- Healthcare — optimizing treatment plans

- Autonomous Vehicles — path planning and real-time decision-making

Related Concepts

- Machine Learning (ML)

- Supervised Learning

- Unsupervised Learning

- RLHF — Reinforcement Learning from Human Feedback

- Deep Learning (DL)

Exam Domain (AIF-C01)

Domain 1 — Fundamentals of AI and ML

- Task Statement 1.1: Types of ML — supervised, unsupervised, and reinforcement learning.